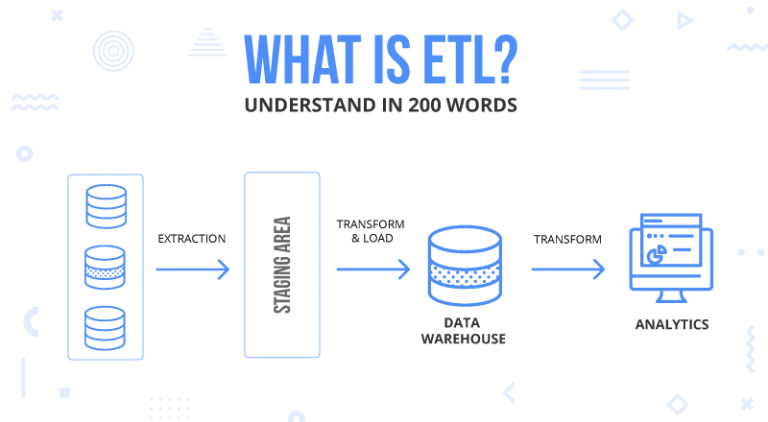

ETL (Extract-Transform-Load) is the most widespread approach to data integration: the practice of consolidating data from disparate source systems to improve access to that data. The story is still the same: businesses have a sea of data at their disposal, and making sense of it fuels business performance. ETL plays a central role in this quest, as it is the process of turning raw, messy data into clean, fresh, and reliable data from which business insights can be derived. This article seeks to bring clarity to how this process is conducted, how ETL tools have evolved, and which tools are the best available for your organization today.

Today, organizations collect data from many different business source systems: cloud applications, CRM systems, files, and so on. The ETL process pools data from these disparate sources to build a single source of truth: the data warehouse. ETL pipelines are data pipelines with a very specific role: extract data from its source system or database, transform it, and load it into the data warehouse, which is a centralized database. Data pipelines themselves are a subset of the data infrastructure, the layer that supports data orchestration, management, and consumption across an organization.

The first step, extraction, pulls data out of the source systems, typically into a staging area: an intermediate storage layer between the sources and the data warehouse. The staging area serves several purposes:

- It's usually impossible to extract all the data from all the source systems simultaneously. The staging area makes it possible to bring data together at different times, so as not to overwhelm the data sources.
- It avoids performing extractions and transformations at the same time, which would also overburden the data sources.
- Finally, a staging area is useful when there are issues with loading data into the centralized database: because the extracted data is kept aside, syncs can be rolled back and resumed as needed.

The second step in data integration is transforming the data, putting it in the right format to make it fit for analysis. In its original source system, data is usually messy and therefore hard to interpret. There are two parts to making data fit for purpose. The first is improving its quality: cleansing invalid data, removing duplicates, standardizing units of measurement, organizing data according to its type, etc. The second is structuring and reformatting the data to fit its particular business purpose. Transactional data is often integrated with operational data, which makes it useful for data analysis and business intelligence. For example, ETL can combine name, place, and pricing data used in business operations with transactional data (such as retail sales or healthcare claims) if that's the structure end users need to conduct their analysis. Transformations are thus mostly dictated by the specific needs of analysts who are trying to solve a precise business problem with data.

The final step in data integration is loading the transformed, correctly formatted data into the data warehouse. Data can be loaded all at once (full load) or in increments at scheduled intervals, moving only new or changed records (incremental load). This is done through batch or stream loading. In batch loading, the ETL software extracts batches of data from a source system, usually on a schedule (for example, every hour). Streaming ETL, also called real-time ETL or stream processing, is an alternative in which information is ingested by the data pipeline as soon as the source system makes it available. The choice between batch and stream processing depends on the specific business use case.
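
To make the extract, transform, and load steps more concrete, here is a minimal batch ETL sketch in Python. It rests on several assumptions that are not part of any particular ETL tool: the source records, their field names (`id`, `customer`, `amount`, `unit`, `updated_at`), and the helper functions are all hypothetical. An in-memory list stands in for the staging area, and an in-memory SQLite database (via the standard `sqlite3` module) stands in for the data warehouse. The transform step cleanses invalid rows, removes duplicates, and standardizes amounts to dollars. The load step supports either a full load or an incremental load that uses an `updated_at` watermark, which is one common way to pick out new or changed records.

```python
import sqlite3

# Hypothetical source records: field names, units, and values are illustrative only.
SOURCE = [
    {"id": 1, "customer": " alice ", "amount": 1250, "unit": "cents", "updated_at": 100},
    {"id": 2, "customer": "BOB", "amount": 3.5, "unit": "dollars", "updated_at": 101},
    {"id": 2, "customer": "Bob", "amount": 4.0, "unit": "dollars", "updated_at": 102},  # newer duplicate
    {"id": 3, "customer": "carol", "amount": None, "unit": "dollars", "updated_at": 103},  # invalid amount
]

UNIT_DIVISORS = {"cents": 100, "dollars": 1}  # standardize every amount to dollars


def init_warehouse(conn):
    with conn:
        conn.execute(
            "CREATE TABLE IF NOT EXISTS sales ("
            "id INTEGER PRIMARY KEY, customer TEXT, amount_usd REAL, updated_at INTEGER)"
        )


def watermark(conn):
    # Highest updated_at already loaded; -1 means the warehouse is empty.
    return conn.execute("SELECT COALESCE(MAX(updated_at), -1) FROM sales").fetchone()[0]


def extract(source, since):
    # Copy only rows changed after the watermark into a staging list.
    return [dict(row) for row in source if row["updated_at"] > since]


def transform(staged):
    latest = {}
    for row in staged:
        # Cleansing: drop rows with a missing amount or an unknown unit.
        if row["amount"] is None or row["unit"] not in UNIT_DIVISORS:
            continue
        # Deduplication: keep only the most recent version of each id.
        prev = latest.get(row["id"])
        if prev is None or row["updated_at"] > prev["updated_at"]:
            latest[row["id"]] = row
    # Reformatting: tidy names and convert amounts to dollars.
    return [
        (r["id"], r["customer"].strip().title(),
         round(r["amount"] / UNIT_DIVISORS[r["unit"]], 2), r["updated_at"])
        for r in sorted(latest.values(), key=lambda r: r["id"])
    ]


def run(conn, source, full=False):
    since = -1 if full else watermark(conn)
    rows = transform(extract(source, since))
    # `with conn` commits on success and rolls back if any statement fails.
    with conn:
        if full:
            conn.execute("DELETE FROM sales")  # full load: replace everything
        conn.executemany("INSERT OR REPLACE INTO sales VALUES (?, ?, ?, ?)", rows)


conn = sqlite3.connect(":memory:")
init_warehouse(conn)
run(conn, SOURCE, full=True)  # initial full load
SOURCE.append({"id": 4, "customer": "dave", "amount": 999, "unit": "cents", "updated_at": 110})
SOURCE.append({"id": 1, "customer": "Alice", "amount": 1300, "unit": "cents", "updated_at": 111})
run(conn, SOURCE)  # incremental load: only rows with updated_at > 102
print(conn.execute("SELECT * FROM sales ORDER BY id").fetchall())
```

A few design choices are worth noting. Because the load runs inside a single transaction, a failure leaves the previously loaded data intact, and the batch can simply be rerun. `INSERT OR REPLACE` makes such a rerun safe, because it overwrites rows that were already loaded. This sketch covers scheduled batch loading only. A streaming pipeline would instead process each record as the source makes it available.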
